24/l2w

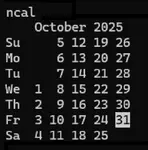

No.2983

How much electricity do you consume in your daily local model runs?

YMx7B4

No.2984

>>2983(OP)

I'm broke. I don't have a proper GPU; the only one I have is the GTX 1650 in my laptop (which is fine for general-purpose use, but not for running LLMs larger than 2B).

yvCyyh

No.2985

>>2983(OP)

I don't use local LLMs, as they are useless on my limited hardware.

tGbf4Z

No.2986

> No GPU

> No life

tGbf4Z

No.2989

>>2983(OP)

I ran local models on my 13th-gen i5. 65 watts peak. OK for LLM use, but not fast. Image generation was not good.

I want to try those new AMD AI CPUs with NPUs built into the chip.
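
For anyone who wants to turn a wattage figure like the 65 W peak above into actual electricity use, here is a rough sketch. The hours-per-day value is a made-up assumption, not something from this thread, and using peak draw for the whole session gives an upper bound for the CPU alone rather than a measurement of the whole machine.

```python
def energy_kwh(power_watts: float, hours: float) -> float:
    """Energy in kilowatt-hours for a constant power draw over a time span."""
    return power_watts * hours / 1000.0


PEAK_WATTS = 65.0     # peak figure quoted in the post above
HOURS_PER_DAY = 2.0   # hypothetical usage, adjust to your own habits
DAYS_PER_MONTH = 30   # assumed month length for a rough estimate

daily = energy_kwh(PEAK_WATTS, HOURS_PER_DAY)
monthly = daily * DAYS_PER_MONTH

print(f"{daily:.2f} kWh/day, {monthly:.2f} kWh/month")
```

Multiply the monthly figure by your own per-unit tariff to get a cost estimate. A wall-socket power meter would give a more honest number than the CPU's rated peak.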

tGbf4Z

No.2990

>>2983(OP)

And while I'd love a 12 GB graphics card, the costs even for a 3050 are high.